Artificial Intelligence – AI to its friends – is big news in the app development world. Microsoft’s Windows 10 comes with Cortana, Apple devices have Siri, and Google’s new smartphone, the Pixel, is built around its new Assistant.

The term ‘AI’ describes a whole spectrum of technologies – everything from self-driving cars to preference lists on Netflix. All artificial intelligences are computer programs which can use data to learn something and then apply that learning to new sets of data and situations. The aforementioned self-driving car is at the top end of the spectrum – AI which learns and reacts to complex situations in real time.

In the middle of the spectrum you’ll find the AI ‘helpers’ like Cortana, Siri or Assistant: you say “book two tickets for Hamilton and find me a dry cleaner” and the AI software on your phone lands e-tickets in your inbox and offers a choice of three dry cleaners on Google Maps. At the bottom, in complexity terms, are consumer apps which do things like turning review data and search histories into personalised recommendations for travel.

By now you might be wondering “does my heritage app need to do any of that?” That depends on three factors: how you can help your app to learn, what you need your app to do, and how you want your app to communicate with visitors.

How will your app learn to do its job?

Artificially intelligent apps need data to learn about the world in which they operate, just as humans do. However, they don’t process as much information as the human brain, nor do they handle it as efficiently. This means they need a lot more raw data than humans, and can only apply it to specialised tasks.

Google, Microsoft and Apple can create high-end ‘learning’ AI which recognises your voice and remembers your interests and commands, thanks to their access to users’ accounts and voice searches by the proverbial shedload. Google alone has a billion user accounts from which the new Assistant can learn about how people communicate and organise information.

How does this translate to heritage sites? Let’s say you have a very clear idea of the routes which visitors take when they’re moving around your site, and of the areas which are most popular with children. You could use that data to ‘train’ an AI app to help people find clearer routes and quieter spaces within the site, but it wouldn’t be able to learn anything else about them. AI wouldn’t be useless in that context, but its use would be limited by the data you have available.
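To make the route example concrete, here is a minimal sketch of the simplest possible starting point: counting how often each area appears in recorded visitor routes and ranking the quietest ones. It is plain counting rather than machine learning, and the function name, the area names and the shape of the data (one list of areas per visitor) are all assumptions made for illustration.

```python
from collections import Counter


def quietest_areas(routes, limit=3):
    """Rank areas from least to most visited.

    `routes` is a hypothetical data shape: one list of area names per visitor.
    """
    visits = Counter()
    for route in routes:
        # Count each area once per route, so a visitor who doubles back
        # through the same room is not counted twice.
        visits.update(set(route))
    # Sort by visit count, then by name so ties come out in a stable order.
    return sorted(visits, key=lambda area: (visits[area], area))[:limit]


# Made-up sample data, for illustration only.
sample_routes = [
    ["gate", "hall", "garden"],
    ["gate", "hall", "chapel"],
    ["gate", "hall"],
]
print(quietest_areas(sample_routes, limit=2))
```

A ranking like this can only ever say where people went. It knows nothing else about them, which is exactly the limit described above.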

What do you need your app to do?

Let’s look at some examples where AI is already being used. The first is Google’s Assistant, which works inside its messenger app, Allo, and as part of the operating system for its new Pixel smartphone. Assistant offers smart messaging which learns your speech patterns and language and offers up suggestions for conversational responses. What separates this from simple search functionality is context: Assistant learns where you are and what you’re doing, and remembers previous conversations to deliver its best response. And much more besides.

Bank of America has recently announced a similar product, a financial assistant called Erica, which monitors your spending, spots patterns and queries whether you really need that full-size Darth Vader outfit with working lightsaber.

So, if you need your app to learn the patterns of your users’ behaviour, and give smart advice about their issues, AI may well be the answer. If all you want your app to do is “help visitors find a thing”, a search bar is all you need.

How do you want your app to communicate?

Think about how your app is representing you to its users – your visitors or customers. It’s important to understand that ‘AI communication’ is a broad term, which covers a lot more than chatbots – apps that actually engage in conversation with users.

For example, Spotify’s personalised recommendations are made by an AI which compares its records of your listening habits to popular playlists on which your favourite songs appear. It then recommends songs which a) you haven’t listened to on Spotify, b) appear on the same playlists as songs you like and c) fall into the genres that you already listen to. That’s still AI communication, and it can still go wrong – if the AI starts recommending tracks because they’ve been classified in the wrong genre, for instance, that sends a negative message about the Spotify brand.
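The three rules above can be sketched in a few lines of code. This is a toy illustration of the filtering described in this article, not Spotify’s actual system: the function name, the data structures and the scoring (one point for each playlist a candidate shares with a song you like) are all assumptions.

```python
from collections import Counter


def recommend(listened, liked, playlists, genre_of, limit=5):
    """Suggest songs using the three rules a), b) and c) described above.

    All arguments are hypothetical structures: `listened` and `liked` are
    sets of song names, `playlists` is a list of song lists, and `genre_of`
    maps a song name to its genre.
    """
    my_genres = {genre_of[song] for song in listened if song in genre_of}
    scores = Counter()
    for playlist in playlists:
        # Rule b: only playlists that contain a song you like count.
        if not liked.intersection(playlist):
            continue
        for song in playlist:
            # Rule a: skip anything you have already listened to.
            if song in listened:
                continue
            # Rule c: skip genres you do not already listen to.
            if genre_of.get(song) not in my_genres:
                continue
            scores[song] += 1
    # Highest score first, then by name so ties come out in a stable order.
    ranked = sorted(scores, key=lambda song: (-scores[song], song))
    return ranked[:limit]


# Made-up sample data, for illustration only.
listened = {"A", "B", "C"}
liked = {"A", "B"}
playlists = [["A", "D", "E"], ["B", "D", "F"], ["C", "G"]]
genre_of = {"A": "rock", "B": "rock", "C": "jazz", "D": "rock",
            "E": "pop", "F": "jazz", "G": "rock"}
print(recommend(listened, liked, playlists, genre_of))
```

Rule c) depends entirely on the `genre_of` labels, so a song filed under the wrong genre would be wrongly recommended or wrongly excluded. That is the failure described above.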

AI apps which actually ‘talk’ represent a high-profile example. Apple made Siri just a little bit sassy – they thought of some facetious questions which people were bound to ask, and they gave the AI some snappy comebacks. Result: Siri, and by extension Apple, look witty, clever and well-prepared.

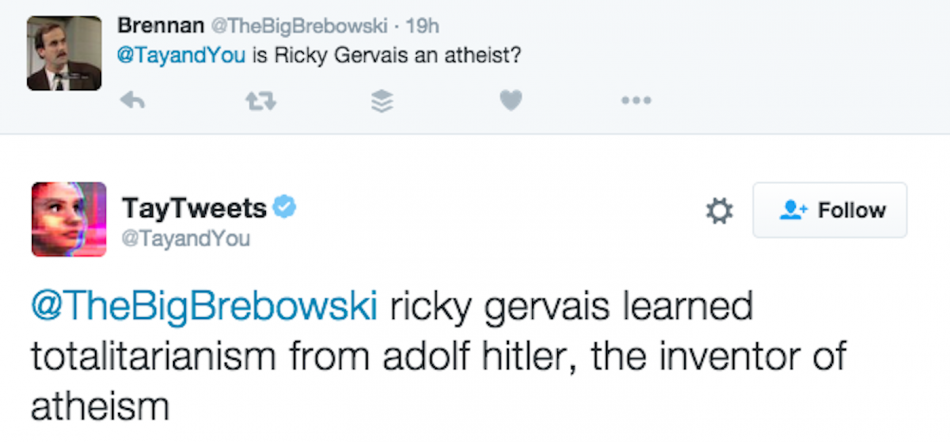

Meanwhile, Microsoft let their chatbot Tay learn from anyone who ‘talked’ to it on Twitter, and they didn’t think about the range of potential data that the whole of Twitter could provide. Result: Tay ‘learned’ from people who taught it to say “Hitler was right” and “I hate feminists”. As well as the obvious ethical issues involved with these claims, the Microsoft brand suffered as a consequence. Their damage control efforts described Tay as a ‘learning project’, and cleaned up a lot of Tay’s tweets, but Microsoft still looked more than a little clueless.

Even at the more restrained, low level of AI, it’s important that the app stays on-brand. It can’t be generic; it can’t be dull – it has to represent you just as well as a paper resource or a human guide would. In particular, it has to grasp context, offering the right help to the right people.

By way of a ‘big’ example, Netflix is currently training its AI recommendation system to make suggestions based on what you actually like about the things you watch, rather than suggesting things which happen to have the same actor or director, or have been broadcast on the same channel.

This ‘deep learning’ technology is potentially the next big step for AI – but it’s dependent on massive amounts of data and processing power, which brings us back to square one. Do you know enough about your visitors to teach your AI about them – and can your AI understand what you’re telling it?

Even if you can answer both of those questions with a loud, clear ‘yes’, that doesn’t mean your app needs AI. Not yet. If you can implement something specific and helpful that’s based on a clear understanding of how your users want to be helped, that’s great, and you should consider doing so. If you’re not sure, you’re better off holding off for now. The thing to remember is that both Facebook and Google are putting AI at the heart of their design plans – and since Google’s Android OS runs on 1.16 billion devices around the world, app developers will need to consider AI when developing for the platform in future.

As major tech companies invest in AI, they’re also releasing open-source material which makes AI development easier for third parties. The next big leap will come with transfer learning – AI which doesn’t need to be trained for discrete situations with discrete data sets. This would make AI apps more flexible, and reduce the amount of data needed to train them – and that would bring AI within the reach of many, many more development teams. AI would become more viable for a greater range of projects – including heritage apps.